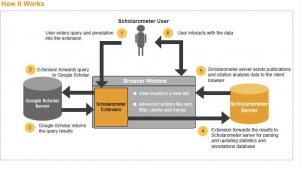

In the Quamen document I was struck by the statement that syntactic web searches are fundamentally binary. This should not have been revelatory, but it really connected with a post-deconstruction-era understanding of language, or rather didn’t connect. As we develop more sophisticated ways of approaching the data that we normally just read, it’s both maddening and invigorating to confront a familiar problem. Semantic web databases, though, represent an answer to the binary limitation by introducing a range of predicates linking subject and object rather than just offering an attribute connected to an entity.
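To make the contrast concrete for myself, here is a minimal Python sketch of the two models. Everything in it is an assumption invented for illustration: the entities (`TextA`, `AuthorX`), the predicate names (`writtenBy`, `respondsTo`), and the helper functions. It is not how any real triplestore is implemented, only a picture of the difference between "entity has attribute" and "subject, predicate, object."

```python
# Hypothetical data, invented for illustration only.

# Attribute model: an entity either carries a tag or it does not.
tags = {
    "TextA": {"novel", "linear"},
    "TextB": {"novel"},
}


def has_attribute(entity, attribute):
    """Binary answer: is the attribute present on the entity?"""
    return attribute in tags.get(entity, set())


# Triple model: a named predicate links a subject to an object.
triples = {
    ("TextA", "writtenBy", "AuthorX"),
    ("TextA", "respondsTo", "TextB"),
    ("TextB", "writtenBy", "AuthorY"),
    ("AuthorX", "influencedBy", "AuthorY"),
}


def match(subject=None, predicate=None, obj=None):
    """Return the triples that fit a pattern; None acts as a wildcard."""
    return sorted(
        t for t in triples
        if (subject is None or t[0] == subject)
        and (predicate is None or t[1] == predicate)
        and (obj is None or t[2] == obj)
    )


def relations_between(subject, obj):
    """Ask *how* two things are related, not merely whether they are."""
    return [p for (_, p, _) in match(subject=subject, obj=obj)]
```

The attribute lookup can only say yes or no, while `relations_between("TextA", "TextB")` returns the name of the relationship itself. That is the extra range the predicate seems to buy.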

How I understand the relationship between traditional databases and semantic web databases reaches back to my post about databases a few weeks ago, in which I looked for similarities between documents and databases. I believe the similarity is to be found in the ideas of inclusion and exclusion: in a document, each word introduces a range of possibilities for the next word, and so on, while a database includes or excludes based on a particular attribute. After a query is run against the dataset, human readers can then begin to interpret based on a set of inclusions and exclusions. This model, however, rests on a binary system in which each query is a matter of the presence or absence of a particular attribute, e.g. “database, give me a list of all texts that are novels and tell a strictly linear narrative.” There are four possibilities: (yes, yes), which is the dataset that is retrieved or included, along with (no, yes), (yes, no), and (no, no). Each ordered pair represents a binary opposition through which I can include or exclude the entity. Insofar as I understand them, however, semantic web databases break free of this system and can engage more nuanced queries.
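The four ordered pairs can be spelled out in a short Python sketch. The records, the field names `is_novel` and `is_linear`, and the `partition` helper are all hypothetical stand-ins for the novel/linear-narrative query above, not data from any real collection.

```python
from itertools import product

# Hypothetical records, invented for illustration only.
texts = [
    {"title": "TextA", "is_novel": True, "is_linear": True},
    {"title": "TextB", "is_novel": True, "is_linear": False},
    {"title": "TextC", "is_novel": False, "is_linear": True},
    {"title": "TextD", "is_novel": False, "is_linear": False},
]


def partition(records, first, second):
    """Sort records into the four (yes/no, yes/no) cells for two attributes."""
    cells = {pair: [] for pair in product([True, False], repeat=2)}
    for record in records:
        cells[(record[first], record[second])].append(record["title"])
    return cells


cells = partition(texts, "is_novel", "is_linear")

# Only the (yes, yes) cell is included; the other three are excluded.
retrieved = cells[(True, True)]
```

Every record falls into exactly one of the four cells, and the query simply keeps one cell and discards the rest. Nothing in this structure can say how two texts relate to each other, only whether each one passes.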

Triplestore databases are built on a similar set of rules to traditional relational databases, but they can represent the data with much more fidelity. I wonder how far this fidelity goes, however. It seems that we could be constructing another Borges tale: a database that represents the world so well that the barrier between the thing and the representation begins to fade. Further, is there any chance that we are moving toward a systemic understanding of the universe which will require that system to describe itself? I am really just thinking of Kurt Gödel’s incompleteness theorems, which show that once a consistent formal system is expressive enough to make statements about itself, it contains true statements that it cannot prove. The system does not so much fall apart as lose any claim to completeness; in that sense, true systemic fidelity is untenable.

I do wonder, however, about a phrase that came up repeatedly in the Bizer piece. Bizer describes linked data as “a web of things in the world, described by data on the web,” which made me think about a number of issues regarding the relationships between objects in the world, and the world’s relationship to representation. It seems that we want to draw back the curtain of this world to see the raw data of existence, but this process is predicated on a human perspective. Is the data that is on the web in Bizer’s statement data about the world, or data about us? I think it’s likely that it’s split between these two possibilities, but how well can a semantic web database tell the difference?